Pioneering Accessible AI Research.

We are decentralizing intelligence by focusing on offline models and small language models (SLMs) that empower individuals and local institutions, reducing dependency on big tech infrastructure.

Research Pillars

Offline-First Architectures

Developing models that operate entirely without internet connectivity, ensuring data sovereignty and reliability in remote environments.

Small Language Models

Compressing large language models (LLMs) into efficient Small Language Models (SLMs) that run on local consumer hardware.

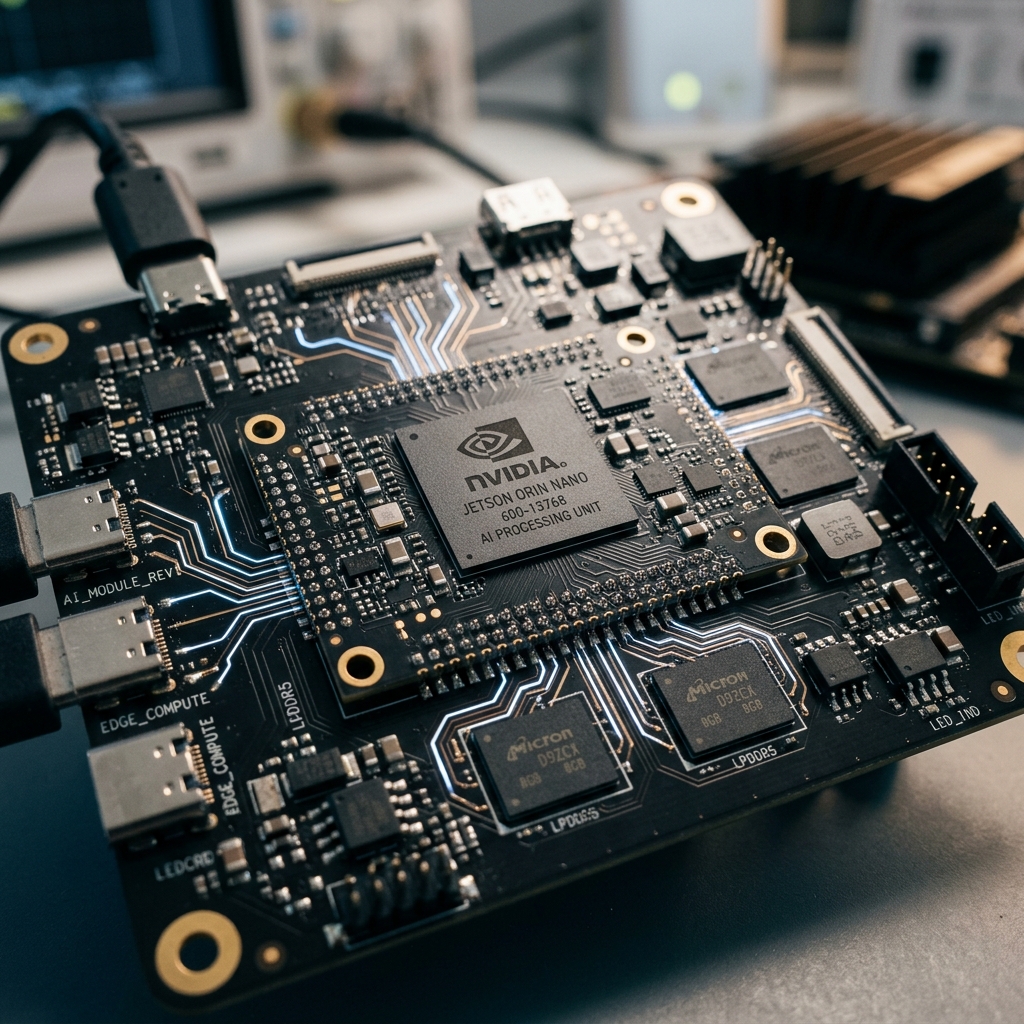

Embedded AI

Our research bridges the gap between software optimization and hardware constraints, tailoring AI for the next generation of RISC-V and ARM edge devices.

AI Optimization

Advancing techniques such as quantization, knowledge distillation, and efficient fine-tuning to run powerful models on resource-constrained devices with minimal loss of accuracy.
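As a rough illustration of the quantization idea mentioned above, the sketch below applies symmetric uniform quantization to a handful of made-up weights at 8, 4, and 2 bits and reports the reconstruction error. The function names and sample values are hypothetical and chosen only for this example. Production schemes typically use per-channel or per-group scales and calibration data, which this sketch omits.

```python
def quantize_symmetric(weights, bits):
    """Map floats to signed integer codes in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1           # e.g. 7 for 4-bit, 1 for 2-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from integer codes."""
    return [c * scale for c in codes]


def mean_abs_error(original, restored):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(x - y) for x, y in zip(original, restored)) / len(original)


# Hypothetical weights, for illustration only.
weights = [0.82, -0.41, 0.05, -0.97, 0.33, 0.61]

for bits in (8, 4, 2):
    codes, scale = quantize_symmetric(weights, bits)
    err = mean_abs_error(weights, dequantize(codes, scale))
    print(f"{bits}-bit codes={codes} mean abs error={err:.4f}")
```

Lower bit widths shrink storage but leave fewer representable levels, so the reconstruction error grows. That trade-off is what the other techniques (distillation, fine-tuning) aim to offset.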

Rigorous Inquiry, Real Impact

Our multidisciplinary team combines academic depth with pragmatic engineering to solve the AI accessibility gap.

Quantization Strategies for Edge LLMs

Evaluating 4-bit and 2-bit quantization on low-power ARM architectures for real-time natural language processing tasks.

Download PDF

Decentralized Intelligence & Data Privacy

A study on how local model deployment fundamentally shifts the power dynamic between users and monolithic tech corporations.

Download PDF

Democratizing AI Literacy through SLMs

How affordable, offline models are transforming educational outcomes in bandwidth-limited regions around the globe.

Download PDF

Become a Research Associate

Join our multidisciplinary team of scientists and engineers. Apply to work on cutting-edge offline models, embedded AI, and ethical tech.